Microsoft AZ-305

282+ Practice Questions with AI-Verified Answers

Designing Microsoft Azure Infrastructure Solutions

Practice Questions

HOTSPOT - You plan to deploy an Azure web app named App1 that will use Azure Active Directory (Azure AD) authentication. App1 will be accessed from the internet by the users at your company. All the users have computers that run Windows 10 and are joined to Azure AD. You need to recommend a solution to ensure that the users can connect to App1 without being prompted for authentication and can access App1 only from company-owned computers. What should you recommend for each requirement? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

The users can connect to App1 without being prompted for authentication: ______

The users can access App1 only from company-owned computers: ______

You are designing a large Azure environment that will contain many subscriptions. You plan to use Azure Policy as part of a governance solution. To which three scopes can you assign Azure Policy definitions? Each correct answer presents a complete solution. NOTE: Each correct selection is worth one point.

HOTSPOT - You need to design a storage solution for an app that will store large amounts of frequently used data. The solution must meet the following requirements:

- Maximize data throughput.
- Prevent the modification of data for one year.
- Minimize latency for read and write operations.

Which Azure Storage account type and storage service should you recommend? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Storage account type: ______

Storage service: ______

You are designing an application that will be hosted in Azure. The application will host video files that range from 50 MB to 12 GB. The application will use certificate-based authentication and will be available to users on the internet. You need to recommend a storage option for the video files. The solution must provide the fastest read performance and must minimize storage costs. What should you recommend?

You have an Azure subscription that contains a custom application named Application1. Application1 was developed by an external company named Fabrikam, Ltd. Developers at Fabrikam were assigned role-based access control (RBAC) permissions to the Application1 components. All users are licensed for the Microsoft 365 E5 plan. You need to recommend a solution to verify whether the Fabrikam developers still require permissions to Application1. The solution must meet the following requirements:

- To the manager of the developers, send a monthly email message that lists the access permissions to Application1.
- If the manager does not verify an access permission, automatically revoke that permission.
- Minimize development effort.

What should you recommend?

You have an Azure subscription. The subscription has a blob container that contains multiple blobs. Ten users in the finance department of your company plan to access the blobs during the month of April. You need to recommend a solution to enable access to the blobs during the month of April only. Which security solution should you include in the recommendation?
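Whatever mechanism is recommended, the requirement reduces to a validity window with a start and an end. The following is a minimal sketch of that window check, not an Azure API: the function names `april_window` and `access_allowed` are hypothetical, and the year and the use of UTC are assumptions because the question does not state either.

```python
from datetime import datetime, timezone


def april_window(year):
    """Return the (start, end) of April for the given year, in UTC.

    The end is exclusive: the first instant of May.
    """
    start = datetime(year, 4, 1, tzinfo=timezone.utc)
    end = datetime(year, 5, 1, tzinfo=timezone.utc)
    return start, end


def access_allowed(now, year):
    """Hypothetical check: is `now` inside the April window for `year`?"""
    start, end = april_window(year)
    return start <= now < end
```

Using a half-open interval (start inclusive, end exclusive) avoids an off-by-one at midnight on the last day of the month.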

You have an Azure Active Directory (Azure AD) tenant that syncs with an on-premises Active Directory domain. You have an internal web app named WebApp1 that is hosted on-premises. WebApp1 uses Integrated Windows authentication. Some users work remotely and do NOT have VPN access to the on-premises network. You need to provide the remote users with single sign-on (SSO) access to WebApp1. Which two features should you include in the solution? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

You have an Azure Active Directory (Azure AD) tenant named contoso.com that has a security group named Group1. Group1 is configured for assigned membership. Group1 has 50 members, including 20 guest users. You need to recommend a solution for evaluating the membership of Group1. The solution must meet the following requirements:

- The evaluation must be repeated automatically every three months.
- Every member must be able to report whether they need to be in Group1.
- Users who report that they do not need to be in Group1 must be removed from Group1 automatically.
- Users who do not report whether they need to be in Group1 must be removed from Group1 automatically.

What should you include in the recommendation?

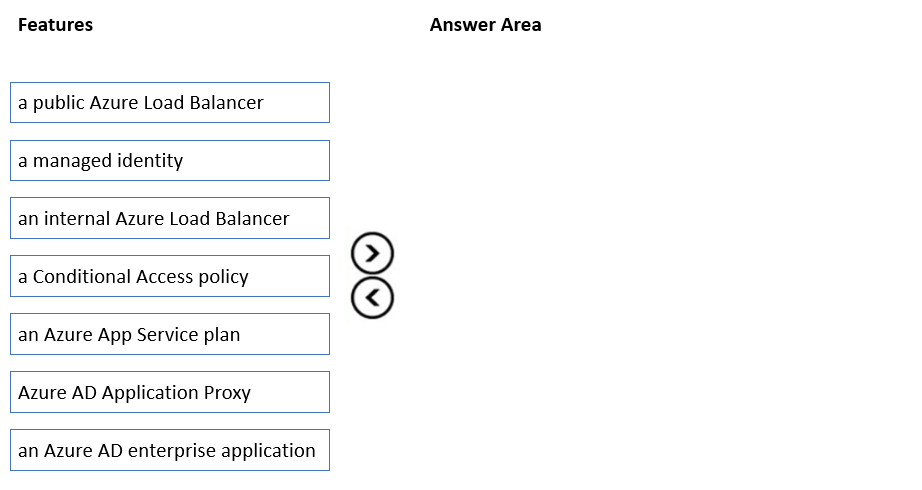

DRAG DROP - Your on-premises network contains a server named Server1 that runs an ASP.NET application named App1. You have a hybrid deployment of Azure Active Directory (Azure AD). You need to recommend a solution to ensure that users sign in by using their Azure AD account and Azure Multi-Factor Authentication (MFA) when they connect to App1 from the internet. Which three features should you recommend be deployed and configured in sequence? To answer, move the appropriate features from the list of features to the answer area and arrange them in the correct order. Select and Place:

Select the correct answer(s) in the image below.

You need to recommend a solution to generate a monthly report of all the new Azure Resource Manager (ARM) resource deployments in your Azure subscription. What should you include in the recommendation?

You have an Azure subscription that contains an Azure Blob Storage account named store1. You have an on-premises file server named Server1 that runs Windows Server 2016. Server1 stores 500 GB of company files. You need to store a copy of the company files from Server1 in store1. Which two possible Azure services achieve this goal? Each correct answer presents a complete solution. NOTE: Each correct selection is worth one point.

Your company has the infrastructure shown in the following table.

| Location | Resource |
| --- | --- |
| Azure | Azure subscription named Subscription1 |
| Azure | 20 Azure web apps |
| On-premises datacenter | Active Directory domain |
| On-premises datacenter | Server running Azure AD Connect |
| On-premises datacenter | Linux computer named Server1 |

The on-premises Active Directory domain syncs with Azure Active Directory (Azure AD). Server1 runs an application named App1 that uses LDAP queries to verify user identities in the on-premises Active Directory domain. You plan to migrate Server1 to a virtual machine in Subscription1. A company security policy states that the virtual machines and services deployed to Subscription1 must be prevented from accessing the on-premises network. You need to recommend a solution to ensure that App1 continues to function after the migration. The solution must meet the security policy. What should you include in the recommendation?

You have an on-premises network and an Azure subscription. The on-premises network has several branch offices. A branch office in Toronto contains a virtual machine named VM1 that is configured as a file server. Users access the shared files on VM1 from all the offices. You need to recommend a solution to ensure that the users can access the shared files as quickly as possible if the Toronto branch office is inaccessible. What should you include in the recommendation?

HOTSPOT - You plan to deploy Azure Databricks to support a machine learning application. Data engineers will mount an Azure Data Lake Storage account to the Databricks file system. Permissions to folders are granted directly to the data engineers. You need to recommend a design for the planned Databricks deployment. The solution must meet the following requirements:

- Ensure that the data engineers can only access folders to which they have permissions.
- Minimize development effort.
- Minimize costs.

What should you include in the recommendation? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Databricks SKU: ______

Cluster configuration: ______

You need to recommend a solution to meet the database retention requirements. What should you recommend?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. Your company deploys several virtual machines on-premises and to Azure. ExpressRoute is deployed and configured for on-premises to Azure connectivity. Several virtual machines exhibit network connectivity issues. You need to analyze the network traffic to identify whether packets are being allowed or denied to the virtual machines. Solution: Use Azure Traffic Analytics in Azure Network Watcher to analyze the network traffic. Does this meet the goal?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. Your company deploys several virtual machines on-premises and to Azure. ExpressRoute is deployed and configured for on-premises to Azure connectivity. Several virtual machines exhibit network connectivity issues. You need to analyze the network traffic to identify whether packets are being allowed or denied to the virtual machines. Solution: Use Azure Advisor to analyze the network traffic. Does this meet the goal?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. Your company deploys several virtual machines on-premises and to Azure. ExpressRoute is deployed and configured for on-premises to Azure connectivity. Several virtual machines exhibit network connectivity issues. You need to analyze the network traffic to identify whether packets are being allowed or denied to the virtual machines. Solution: Use Azure Network Watcher to run IP flow verify to analyze the network traffic. Does this meet the goal?
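The goal in this series, deciding whether a packet is allowed or denied, comes down to which rule matches the packet first. The following is a minimal sketch of that evaluation, assuming rules are checked in ascending priority order and the first match wins, as network security group rules are. The `Rule` class, the `verdict` function, and the sample addresses are hypothetical illustrations, not part of any Azure SDK.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass(frozen=True)
class Rule:
    priority: int          # lower number is evaluated first
    source: str            # source range in CIDR notation
    port: Optional[int]    # destination port; None matches any port
    allow: bool            # True = allow, False = deny


def verdict(rules, src_ip, port):
    """Return "Allow" or "Deny" for a packet, using first-match-wins."""
    for rule in sorted(rules, key=lambda r: r.priority):
        in_range = ip_address(src_ip) in ip_network(rule.source)
        port_ok = rule.port is None or rule.port == port
        if in_range and port_ok:
            return "Allow" if rule.allow else "Deny"
    # Assumption for this sketch: traffic matching no rule is denied.
    return "Deny"


# Hypothetical rule set: allow HTTPS from one subnet, deny everything else.
SAMPLE_RULES = [
    Rule(priority=100, source="10.0.0.0/24", port=443, allow=True),
    Rule(priority=200, source="0.0.0.0/0", port=None, allow=False),
]
```

With `SAMPLE_RULES`, a packet from `10.0.0.5` to port 443 matches the priority-100 rule, while the same source on port 80 falls through to the priority-200 deny rule.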

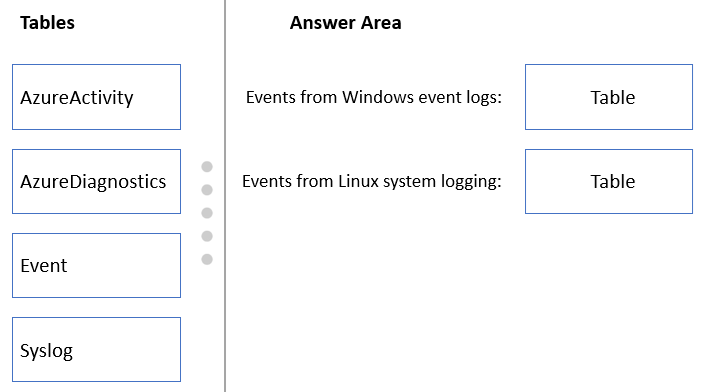

DRAG DROP - You have an Azure subscription. The subscription contains Azure virtual machines that run Windows Server 2016 and Linux. You need to use Azure Monitor to design an alerting strategy for security-related events. Which Azure Monitor Logs tables should you query? To answer, drag the appropriate tables to the correct log types. Each table may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content. NOTE: Each correct selection is worth one point. Select and Place:

Select the correct answer(s) in the image below.

You are designing an application that will aggregate content for users. You need to recommend a database solution for the application. The solution must meet the following requirements:

- Support SQL commands.
- Support multi-master writes.
- Guarantee low latency read operations.

What should you include in the recommendation?
