Microsoft AZ-500

442+ Practice Questions with AI-Verified Answers

Microsoft Azure Security Engineer Associate

Triple AI-Verified Answers & Explanations

Every Microsoft AZ-500 answer is cross-verified by 3 leading AI models to ensure maximum accuracy. Get detailed per-option explanations and in-depth question analysis.

Practice Questions

HOTSPOT - You have a network security group (NSG) bound to an Azure subnet. You run Get-AzNetworkSecurityRuleConfig and receive the output shown in the following exhibit.

| Property | Rule 1 | Rule 2 | Rule 3 |
| --- | --- | --- | --- |
| Name | DenyStorageAccess | StorageE2A2Allow | Contoso_FTP |
| ProvisioningState | | Succeeded | |
| Description | | | |
| Protocol | `*` | `*` | TCP |
| SourcePortRange | `{}` | `{}` | `{*}` |
| DestinationPortRange | `{}` | `{443}` | `{21}` |
| SourceAddressPrefix | `{*}` | `{}` | `{1.2.3.4/32}` |
| DestinationAddressPrefix | `{Storage}` | `{Storage.EastUS2}` | `{10.0.0.5/32}` |
| SourceApplicationSecurityGroups | `[]` | `[]` | `[]` |
| DestinationApplicationSecurityGroups | `[]` | `[]` | `[]` |
| Access | Deny | Allow | Allow |
| Priority | 105 | 104 | 504 |
| Direction | Outbound | Outbound | Inbound |

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic. NOTE: Each correct selection is worth one point. Hot Area:

Traffic destined for an Azure Storage account is ______.

FTP connections from 1.2.3.4 to 10.0.0.10/32 are ______.
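As background for the exhibit, NSG rules are processed in priority order (lowest number first), and the first rule that matches a flow decides whether it is allowed or denied. The following is a minimal sketch of that first-match logic in Python, not Azure's implementation. The `Rule` class and `evaluate` function are hypothetical names, service tags such as `Storage` are not modeled, and only the default `DenyAllInBound` rule (priority 65500) is included; the other default inbound rules are left out as a simplifying assumption.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Rule:
    """Simplified, hypothetical model of one NSG security rule."""
    name: str
    priority: int
    protocol: str      # "TCP", "UDP", or "*"
    source: str        # CIDR prefix or "*"
    destination: str   # CIDR prefix or "*"
    dest_port: str     # a single port as text, or "*"
    access: str        # "Allow" or "Deny"


def _addr_matches(prefix: str, address: str) -> bool:
    """Return True if the address falls inside the prefix ("*" matches all)."""
    return prefix == "*" or ip_address(address) in ip_network(prefix)


def evaluate(rules, protocol, source, destination, dest_port):
    """Return (rule name, access) of the first matching rule by priority."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if (rule.protocol in ("*", protocol)
                and _addr_matches(rule.source, source)
                and _addr_matches(rule.destination, destination)
                and rule.dest_port in ("*", str(dest_port))):
            return rule.name, rule.access
    # Assumption: nothing matched, so treat the flow as denied.
    return None, "Deny"


# Inbound rules: Contoso_FTP from the exhibit plus the default deny rule.
INBOUND_RULES = [
    Rule("Contoso_FTP", 504, "TCP", "1.2.3.4/32", "10.0.0.5/32", "21", "Allow"),
    Rule("DenyAllInBound", 65500, "*", "*", "*", "*", "Deny"),
]
```

Calling `evaluate(INBOUND_RULES, "TCP", "1.2.3.4", "10.0.0.10", 21)` shows which rule decides a flow whose destination differs from the `10.0.0.5/32` prefix in `Contoso_FTP`.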

You create a new Azure subscription. You need to ensure that you can create custom alert rules in Azure Security Center. Which two actions should you perform? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have an Azure subscription named Sub1. You have an Azure Storage account named sa1 in a resource group named RG1. Users and applications access the blob service and the file service in sa1 by using several shared access signatures (SASs) and stored access policies. You discover that unauthorized users accessed both the file service and the blob service. You need to revoke all access to sa1. Solution: You create a new stored access policy. Does this meet the goal?

HOTSPOT - You have an Azure subscription that contains the virtual machines shown in the following table.

| Name | Resource group | Status |
| --- | --- | --- |
| VM1 | RG1 | Stopped (Deallocated) |
| VM2 | RG2 | Stopped (Deallocated) |

You create the Azure policies shown in the following table.

| Policy | Resource type | Scope |
| --- | --- | --- |
| Not allowed resource types | virtualMachines | RG1 |
| Allowed resource types | virtualMachines | RG2 |

You create the resource locks shown in the following table.

| Name | Lock type | Created on |
| --- | --- | --- |
| Lock1 | Read-only | VM1 |
| Lock2 | Read-only | RG2 |

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point. Hot Area:

You can start VM1.

You can start VM2.

You can create a virtual machine in RG2.

From Azure Security Center, you create a custom alert rule. You need to configure which users will receive an email message when the alert is triggered. What should you do?

Want to practice all questions on the go?

Download Cloud Pass — includes practice tests, progress tracking & more.

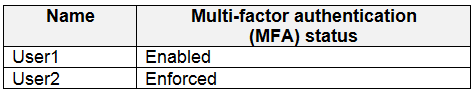

HOTSPOT - Your company has two offices in Seattle and New York. Each office connects to the Internet by using a NAT device. The offices use the IP addresses shown in the following table.

| Location | IP address space | Public NAT segment |
| --- | --- | --- |
| Seattle | 10.10.0.0/16 | 190.15.1.0/24 |
| New York | 172.16.0.0/16 | 194.25.2.0/24 |

The company has an Azure Active Directory (Azure AD) tenant named contoso.com. The tenant contains the users shown in the following table.

The MFA service settings are configured as shown in the exhibit. (Click the Exhibit tab.)

trusted ips (learn more)

☑️ Skip multi-factor authentication for requests from federated users on my intranet

Skip multi-factor authentication for requests from following range of IP address subnets

10.10.0.0/16

194.25.2.0/24

verification options (learn more)

Methods available to users:

☑️ Call to phone

☑️ Text message to phone

⬜ Notification through mobile app

⬜ Verification code from mobile app or hardware token

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point. Hot Area:

If User1 signs in to Azure from a device that uses an IP address of 134.18.14.10, User1 must be authenticated by using a phone.

If User2 signs in to Azure from a device in the Seattle office, User2 must be authenticated by using the Microsoft Authenticator app.

If User2 signs in to Azure from a device in the New York office, User2 must be authenticated by using a phone.
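When reasoning about the trusted IPs setting, it helps to remember that Azure AD sees the public address a request arrives from (the NAT address), not the private address of the device behind it. The sketch below, which uses only Python's standard `ipaddress` module, checks a public address against the two ranges listed in the exhibit. The function name `is_trusted` and the sample host addresses are hypothetical, chosen for illustration.

```python
from ipaddress import ip_address, ip_network

# Ranges copied from the "trusted ips" section of the exhibit.
TRUSTED_RANGES = ["10.10.0.0/16", "194.25.2.0/24"]


def is_trusted(public_ip: str, trusted_ranges=TRUSTED_RANGES) -> bool:
    """Return True if the public source address falls in any trusted range."""
    addr = ip_address(public_ip)
    return any(addr in ip_network(prefix) for prefix in trusted_ranges)


if __name__ == "__main__":
    # Hypothetical sample addresses: one inside each office's public NAT
    # segment, plus the external address mentioned in the first statement.
    for label, ip in [("Seattle NAT", "190.15.1.20"),
                      ("New York NAT", "194.25.2.20"),
                      ("External", "134.18.14.10")]:
        print(f"{label:13} {ip:15} trusted={is_trusted(ip)}")
```

Because the check runs against public addresses, a private range in the trusted list only matters if requests could actually arrive with a source address in that range.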

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have a hybrid configuration of Azure Active Directory (Azure AD). You have an Azure HDInsight cluster on a virtual network. You plan to allow users to authenticate to the cluster by using their on-premises Active Directory credentials. You need to configure the environment to support the planned authentication. Solution: You deploy Azure Active Directory Domain Services (Azure AD DS) to the Azure subscription. Does this meet the goal?

You plan to use Azure Resource Manager templates to perform multiple deployments of identically configured Azure virtual machines. The password for the administrator account of each deployment is stored as a secret in different Azure key vaults. You need to identify a method to dynamically construct a resource ID that will designate the key vault containing the appropriate secret during each deployment. The name of the key vault and the name of the secret will be provided as inline parameters. What should you use to construct the resource ID?
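For orientation, a key vault's resource ID follows the general Azure pattern of subscription, resource group, provider namespace, resource type, and name. The Python helper below only illustrates that string shape; `key_vault_resource_id` and the sample values are hypothetical, and it does not indicate which template feature the question is asking about.

```python
def key_vault_resource_id(subscription_id: str,
                          resource_group: str,
                          vault_name: str) -> str:
    """Build the resource ID string for a key vault (illustrative only)."""
    return (f"/subscriptions/{subscription_id}"
            f"/resourceGroups/{resource_group}"
            f"/providers/Microsoft.KeyVault/vaults/{vault_name}")


if __name__ == "__main__":
    # Hypothetical placeholder values, not taken from the question.
    print(key_vault_resource_id(
        "00000000-0000-0000-0000-000000000000", "ExampleRG", "ExampleVault"))
```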

You have an Azure subscription that contains an Azure key vault named Vault1. In Vault1, you create a secret named Secret1. An application developer registers an application in Azure Active Directory (Azure AD). You need to ensure that the application can use Secret1. What should you do?

HOTSPOT - You have an Azure subscription named Sub1. You create a virtual network that contains one subnet. On the subnet, you provision the virtual machines shown in the following table.

| Name | Network interface | Application security group assignment | IP address |
| --- | --- | --- | --- |
| VM1 | NIC1 | AppGroup12 | 10.0.0.10 |
| VM2 | NIC2 | AppGroup12 | 10.0.0.11 |
| VM3 | NIC3 | AppGroup3 | 10.0.0.100 |
| VM4 | NIC4 | AppGroup4 | 10.0.0.200 |

Currently, you have not provisioned any network security groups (NSGs). You need to implement network security to meet the following requirements:

✑ Allow traffic to VM4 from VM3 only.

✑ Allow traffic from the Internet to VM1 and VM2 only.

✑ Minimize the number of NSGs and network security rules.

How many NSGs and network security rules should you create? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

NSGs: ______

Network security rules: ______

HOTSPOT - What is the membership of Group1 and Group2? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Group1: ______

Group2: ______

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have an Azure subscription named Sub1. You have an Azure Storage account named sa1 in a resource group named RG1. Users and applications access the blob service and the file service in sa1 by using several shared access signatures (SASs) and stored access policies. You discover that unauthorized users accessed both the file service and the blob service. You need to revoke all access to sa1. Solution: You generate new SASs. Does this meet the goal?

Your company has an Azure subscription named Sub1 that is associated to an Azure Active Directory (Azure AD) tenant named contoso.com. The company develops an application named App1. App1 is registered in Azure AD. You need to ensure that App1 can access secrets in Azure Key Vault on behalf of the application users. What should you configure?

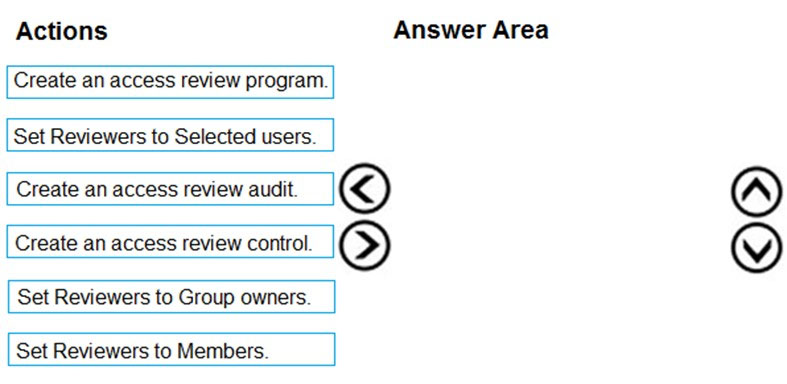

DRAG DROP - You need to configure an access review. The review will be assigned to a new collection of reviews and reviewed by resource owners. Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order. Select and Place:

Select the correct answer(s) in the image below.

Your company plans to create separate subscriptions for each department. Each subscription will be associated to the same Azure Active Directory (Azure AD) tenant. You need to configure each subscription to have the same role assignments. What should you use?

HOTSPOT - You have two Azure virtual machines in the East US 2 region as shown in the following table.

| Name | Operating system | Type | Tier |
| --- | --- | --- | --- |
| VM1 | Windows Server 2008 R2 | A3 | Basic |
| VM2 | Ubuntu 16.04-DAILY-LTS | L4s | Standard |

You deploy and configure an Azure Key vault. You need to ensure that you can enable Azure Disk Encryption on VM1 and VM2. What should you modify on each virtual machine? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

VM1 ______

VM2 ______

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen. You have an Azure subscription. The subscription contains 50 virtual machines that run Windows Server 2012 R2 or Windows Server 2016. You need to deploy Microsoft Antimalware to the virtual machines. Solution: You add an extension to each virtual machine. Does this meet the goal?

You have an Azure subscription that contains a virtual machine named VM1. You create an Azure key vault that has the following configurations:

✑ Name: Vault5

✑ Region: West US

✑ Resource group: RG1

You need to use Vault5 to enable Azure Disk Encryption on VM1. The solution must support backing up VM1 by using Azure Backup. Which key vault settings should you configure?

You have an Azure web app named webapp1. You need to configure continuous deployment for webapp1 by using an Azure Repo. What should you create first?

HOTSPOT - You have an Azure subscription. The subscription contains Azure virtual machines that run Windows Server 2016. You need to implement a policy to ensure that each virtual machine has a custom antimalware virtual machine extension installed. How should you complete the policy? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

"effect": "______"

"parameters": { "______": {
