Practice Test #2

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.


Triple AI-Verified Answers & Explanations

Every answer is cross-verified by 3 leading AI models to ensure maximum accuracy. Get detailed per-option explanations and in-depth question analysis.

Practice Questions

HOTSPOT - You need to design a storage solution for an app that will store large amounts of frequently used data. The solution must meet the following requirements:

✑ Maximize data throughput.

✑ Prevent the modification of data for one year.

✑ Minimize latency for read and write operations.

Which Azure Storage account type and storage service should you recommend? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Storage account type: ______

Storage service: ______

You have an Azure Active Directory (Azure AD) tenant that syncs with an on-premises Active Directory domain. You have an internal web app named WebApp1 that is hosted on-premises. WebApp1 uses Integrated Windows authentication. Some users work remotely and do NOT have VPN access to the on-premises network. You need to provide the remote users with single sign-on (SSO) access to WebApp1. Which two features should you include in the solution? Each correct answer presents part of the solution. NOTE: Each correct selection is worth one point.

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You need to deploy resources to host a stateless web app in an Azure subscription. The solution must meet the following requirements:

✑ Provide access to the full .NET framework.

✑ Provide redundancy if an Azure region fails.

✑ Grant administrators access to the operating system to install custom application dependencies.

Solution: You deploy two Azure virtual machines to two Azure regions, and you create an Azure Traffic Manager profile.

Does this meet the goal?

By default, HTTPS traffic is allowed in NSG outbound security rules.

VPN Gateway is required to connect Azure premises to on-premises.

Azure Load Balancer supports inbound and outbound scenarios.

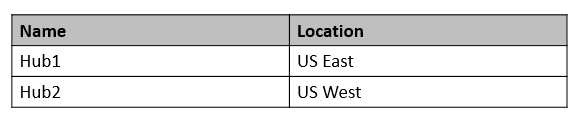

You have an Azure subscription that contains a Basic Azure virtual WAN named VirtualWAN1 and the virtual hubs shown in the following table.

You have an ExpressRoute circuit in the US East Azure region. You need to create an ExpressRoute association to VirtualWAN1. What should you do first?

You have an Azure subscription that contains two applications named App1 and App2. App1 is a sales processing application. When a transaction in App1 requires shipping, a message is added to an Azure Storage account queue, and then App2 listens to the queue for relevant transactions. In the future, additional applications will be added that will process some of the shipping requests based on the specific details of the transactions. You need to recommend a replacement for the storage account queue to ensure that each additional application will be able to read the relevant transactions. What should you recommend?
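The routing requirement in this scenario, where each consuming application receives only the transactions relevant to it, can be pictured as a fan-out with one filter per consumer. The following is a minimal in-memory sketch of that requirement only, not of any Azure service. The `Broker` class, the `IntlShippingApp` consumer, and the message fields are hypothetical names introduced for illustration.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Message = Dict[str, object]
Filter = Callable[[Message], bool]


class Broker:
    """Hypothetical in-memory fan-out: every subscriber receives its own
    copy of each published message that passes its filter."""

    def __init__(self) -> None:
        self._filters: Dict[str, Filter] = {}
        self.inboxes: Dict[str, List[Message]] = defaultdict(list)

    def subscribe(self, name: str, accepts: Filter) -> None:
        self._filters[name] = accepts

    def publish(self, message: Message) -> None:
        for name, accepts in self._filters.items():
            if accepts(message):
                # Copy so that one consumer cannot mutate another's message.
                self.inboxes[name].append(dict(message))


broker = Broker()
# App2 handles every shipping request. The second consumer is an assumed
# future application that only cares about non-US shipments.
broker.subscribe("App2", lambda m: bool(m["requires_shipping"]))
broker.subscribe(
    "IntlShippingApp",
    lambda m: bool(m["requires_shipping"]) and m["country"] != "US",
)

broker.publish({"id": 1, "requires_shipping": True, "country": "US"})
broker.publish({"id": 2, "requires_shipping": True, "country": "DE"})
broker.publish({"id": 3, "requires_shipping": False, "country": "DE"})
```

In this sketch, message 2 lands in both inboxes, which is the behavior a single shared queue does not give: once one consumer processes and deletes a queue message, no other consumer sees it.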


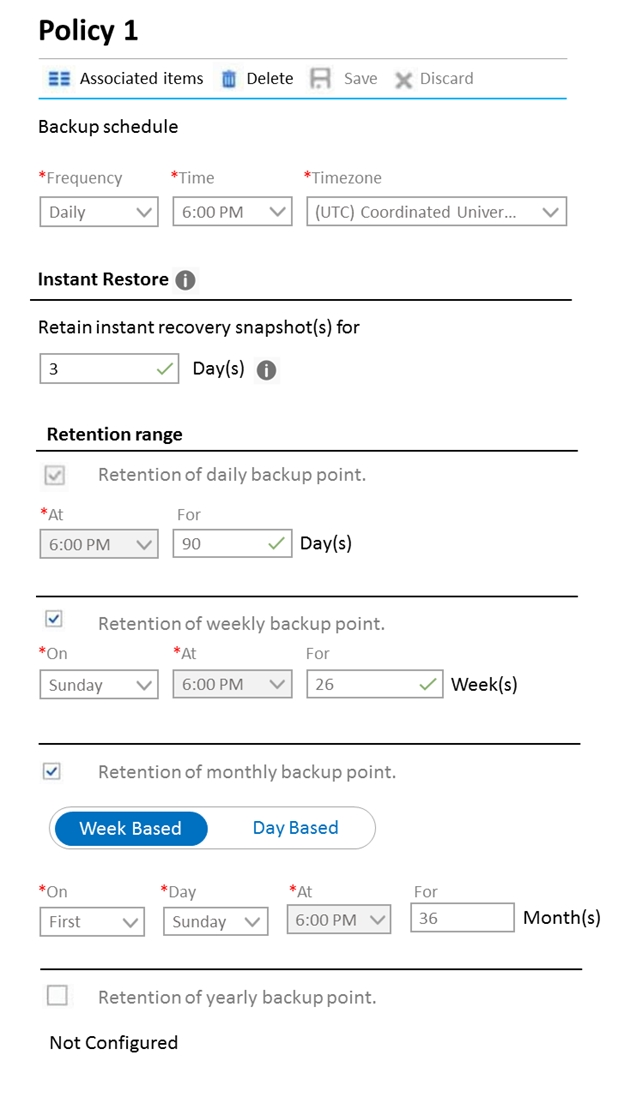

HOTSPOT - You plan to deploy the backup policy shown in the following exhibit.

Use the drop-down menus to select the answer choice that completes each statement based on the information presented in the graphic. NOTE: Each correct selection is worth one point. Hot Area:


Virtual machines that are backed up by using the policy can be recovered for up to a maximum of ______.

The minimum recovery point objective (RPO) for virtual machines that are backed up by using the policy is ______.

You have SQL Server on an Azure virtual machine. The databases are written to nightly as part of a batch process. You need to recommend a disaster recovery solution for the data. The solution must meet the following requirements:

✑ Provide the ability to recover in the event of a regional outage.

✑ Support a recovery time objective (RTO) of 15 minutes.

✑ Support a recovery point objective (RPO) of 24 hours.

✑ Support automated recovery.

✑ Minimize costs.

What should you include in the recommendation?

HOTSPOT - You are designing an Azure App Service web app. You plan to deploy the web app to the North Europe Azure region and the West Europe Azure region. You need to recommend a solution for the web app. The solution must meet the following requirements:

✑ Users must always access the web app from the North Europe region, unless the region fails.

✑ The web app must be available to users if an Azure region is unavailable.

✑ Deployment costs must be minimized.

What should you include in the recommendation? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

Request routing method: ______

Request routing configuration: ______
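The first two requirements of this scenario describe an ordered preference between regions: North Europe serves all traffic while it is healthy, and West Europe takes over only when it is not. A minimal sketch of that selection rule is shown below. The `pick_region` function and the health map are hypothetical and say nothing about which Azure service or setting implements the rule.

```python
from typing import Dict, List, Optional


def pick_region(preference: List[str], healthy: Dict[str, bool]) -> Optional[str]:
    """Return the first healthy region in preference order, or None
    when no region is available."""
    for region in preference:
        if healthy.get(region, False):
            return region
    return None


# Region order taken from the scenario: North Europe first, West Europe second.
PREFERENCE = ["North Europe", "West Europe"]

normal = pick_region(PREFERENCE, {"North Europe": True, "West Europe": True})
failover = pick_region(PREFERENCE, {"North Europe": False, "West Europe": True})
```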

You have an Azure subscription that contains a storage account. An application sometimes writes duplicate files to the storage account. You have a PowerShell script that identifies and deletes duplicate files in the storage account. Currently, the script is run manually after approval from the operations manager. You need to recommend a serverless solution that performs the following actions:

✑ Runs the script once an hour to identify whether duplicate files exist

✑ Sends an email notification to the operations manager requesting approval to delete the duplicate files

✑ Processes an email response from the operations manager specifying whether the deletion was approved

✑ Runs the script if the deletion was approved

What should you include in the recommendation?
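The four required actions form a small approval workflow: detect, ask, wait for the reply, then act only on approval. The sketch below models one hourly iteration of that control flow in plain Python. It assumes the email round trip can be reduced to a single callable that returns the manager's decision; `hourly_run` and its three callables are hypothetical stand-ins, not Azure APIs, and the hourly scheduling itself is left out.

```python
from typing import Callable, List


def hourly_run(
    find_duplicates: Callable[[], List[str]],
    request_approval: Callable[[List[str]], bool],
    delete_files: Callable[[List[str]], None],
) -> str:
    """Run one scheduled iteration and report what happened."""
    duplicates = find_duplicates()
    if not duplicates:
        # Nothing to approve, so no email is sent.
        return "no-duplicates"
    # Stands in for sending the approval email and processing the reply.
    approved = request_approval(duplicates)
    if not approved:
        return "rejected"
    delete_files(duplicates)
    return "deleted"


# Example with stub callables in place of the real script and mailbox.
deleted: List[str] = []
outcome = hourly_run(
    find_duplicates=lambda: ["report (1).csv"],
    request_approval=lambda files: True,
    delete_files=deleted.extend,
)
```

Keeping the approval step as a separate callable mirrors the scenario's split between the script, which finds and deletes files, and the email exchange that gates the deletion.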

HOTSPOT - Your company has 20 web APIs that were developed in-house. The company is developing 10 web apps that will use the web APIs. The web apps and the APIs are registered in the company's Azure Active Directory (Azure AD) tenant. The web APIs are published by using Azure API Management. You need to recommend a solution to block unauthorized requests originating from the web apps from reaching the web APIs. The solution must meet the following requirements:

✑ Use Azure AD-generated claims.

✑ Minimize configuration and management effort.

What should you include in the recommendation? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:


Grant permissions to allow the web apps to access the web APIs by using: ______

Configure a JSON Web Token (JWT) validation policy by using: ______
