Practice Test #1

Simulate the real exam experience with 50 questions and a 100-minute time limit. Practice with AI-verified answers and detailed explanations.

Triple AI-Verified Answers & Explanations

Every answer is cross-verified by 3 leading AI models to ensure maximum accuracy. Get detailed per-option explanations and in-depth question analysis.

Practice Questions

HOTSPOT - You plan to deploy an Azure web app named App1 that will use Azure Active Directory (Azure AD) authentication. App1 will be accessed from the internet by the users at your company. All the users have computers that run Windows 10 and are joined to Azure AD. You need to recommend a solution to ensure that the users can connect to App1 without being prompted for authentication and can access App1 only from company-owned computers. What should you recommend for each requirement? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point. Hot Area:

The users can connect to App1 without being prompted for authentication: ______

The users can access App1 only from company-owned computers: ______

You need to recommend a solution to generate a monthly report of all the new Azure Resource Manager (ARM) resource deployments in your Azure subscription. What should you include in the recommendation?

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution. After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

Your company deploys several virtual machines on-premises and to Azure. ExpressRoute is deployed and configured for on-premises to Azure connectivity. Several virtual machines exhibit network connectivity issues. You need to analyze the network traffic to identify whether packets are being allowed or denied to the virtual machines.

Solution: Use Azure Network Watcher to run IP flow verify to analyze the network traffic.

Does this meet the goal?

You are designing an application that will aggregate content for users. You need to recommend a database solution for the application. The solution must meet the following requirements:

- Support SQL commands.
- Support multi-master writes.
- Guarantee low latency read operations.

What should you include in the recommendation?

You have the Azure resources shown in the following table.

| Name | Type | Location |
| --- | --- | --- |
| US-Central-Firewall-policy | Azure Firewall policy | Central US |
| US-East-Firewall-policy | Azure Firewall policy | East US |
| EU-Firewall-policy | Azure Firewall policy | West Europe |
| USEastfirewall | Azure Firewall | Central US |
| USWestfirewall | Azure Firewall | East US |
| EUFirewall | Azure Firewall | West Europe |

You need to deploy a new Azure Firewall policy that will contain mandatory rules for all Azure Firewall deployments. The new policy will be configured as a parent policy for the existing policies. What is the minimum number of additional Azure Firewall policies you should create?

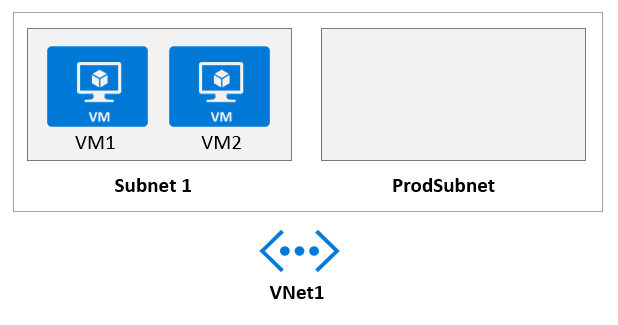

HOTSPOT - Your company develops a web service that is deployed to an Azure virtual machine named VM1. The web service allows an API to access real-time data from VM1. The current virtual machine deployment is shown in the Deployment exhibit.

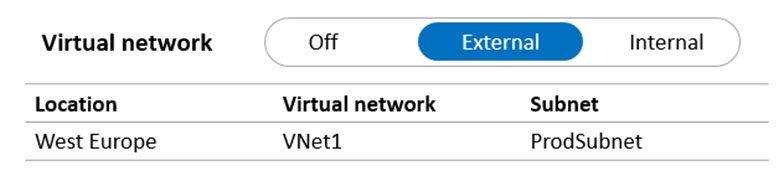

The chief technology officer (CTO) sends you the following email message: "Our developers have deployed the web service to a virtual machine named VM1. Testing has shown that the API is accessible from VM1 and VM2. Our partners must be able to connect to the API over the Internet. Partners will use this data in applications that they develop."

You deploy an Azure API Management (APIM) service. The relevant API Management configuration is shown in the API exhibit.

For each of the following statements, select Yes if the statement is true. Otherwise, select No. NOTE: Each correct selection is worth one point. Hot Area:

The API is available to partners over the internet.

The APIM instance can access real-time data from VM1.

A VPN gateway is required for partner access.

You are developing a sales application that will contain several Azure cloud services and handle different components of a transaction. Different cloud services will process customer orders, billing, payment, inventory, and shipping. You need to recommend a solution to enable the cloud services to asynchronously communicate transaction information by using XML messages. What should you include in the recommendation?

You need to design a highly available Azure SQL database that meets the following requirements:

- Failover between replicas of the database must occur without any data loss.
- The database must remain available in the event of a zone outage.
- Costs must be minimized.

Which deployment option should you use?

You have a .NET web service named Service1 that performs the following tasks:

- Reads and writes temporary files to the local file system.
- Writes to the Application event log.

You need to recommend a solution to host Service1 in Azure. The solution must meet the following requirements:

- Minimize maintenance overhead.
- Minimize costs.

What should you include in the recommendation?

You plan to deploy an Azure SQL database that will store Personally Identifiable Information (PII). You need to ensure that only privileged users can view the PII. What should you include in the solution?
